Cube5 AI builds tools for businesses that live inside complex documents. Not the kind you can copy-paste into a chatbot. Think 500-page corporate annual reports, dense technical specifications, or the credit memos that large banks build before approving a loan. The core idea: instead of shredding a document and feeding it to an AI, bring the AI to the document. Embed it on the surface, connect it to organizational context, and let analysts interrogate it there.

That’s the product. But what Greger Ottosson, Cube5’s co-founder and CEO, is doing inside his own company is equally interesting. Cube5 runs its own operations as an agentic business, with a workflow built to adapt around what each client actually needs.

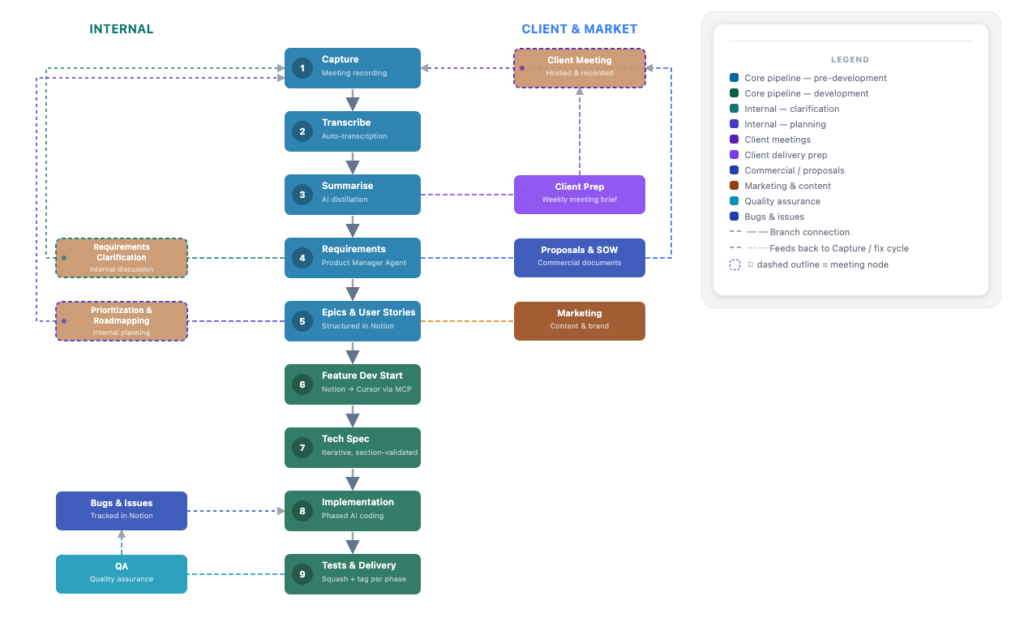

Inside Cube5’s internal workflow

The workflow starts with a recording. Every external client call gets captured (unless a client opts out), and so do internal meetings. Generic transcription tools handle the first pass: who said what, action items, a summary. Then Cube5’s own product manager agent takes over.

This is where generic ends and specialized begins. The PM agent isn’t a general-purpose model. It’s loaded with context on Cube5’s existing product, roadmap, and feature set, so when it pulls requirements from a call transcript, it understands how a new feature would sit alongside what already exists. It drafts epics and user stories. Follow-up review sessions get recorded too, and fed back in to refine those drafts.

At this point, no one has written a line of code.

Two more agents run before that happens. The marketing agent, which knows Cube5’s positioning and messaging, fires in parallel with the PM agent. Greger is direct about what that produces: marketing agents tend to embellish. They add “floral language,” as he puts it, and occasionally over-promise. The output gets reviewed. But the value isn’t in taking it verbatim. It’s a forcing function for articulating what the feature is worth before anyone starts building it. Think Amazon’s “working backwards” press release, generated before the product exists.

The proposal agent runs in parallel. For client work, it generates scope, schedule, and pricing. That proposal goes back into a meeting with the client. The meeting gets recorded. Requirements update. Epics refresh. The loop is deliberate.
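To make the fan-out concrete, here is a minimal sketch of three agents working from the same transcript in parallel. It is not Cube5’s implementation: `run_agent`, `process_call`, and the `Draft` type are hypothetical names, and the model call is replaced by a placeholder that only labels its output.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class Draft:
    agent: str
    text: str


async def run_agent(agent: str, transcript: str) -> Draft:
    # Hypothetical stand-in for a real model call with agent-specific context.
    await asyncio.sleep(0)
    words = len(transcript.split())
    return Draft(agent=agent, text=f"[{agent}] draft from {words} words of transcript")


async def process_call(transcript: str) -> dict[str, Draft]:
    # All three agents start from the same transcript and run concurrently.
    agents = ["pm", "marketing", "proposal"]
    drafts = await asyncio.gather(*(run_agent(a, transcript) for a in agents))
    return {d.agent: d for d in drafts}


drafts = asyncio.run(process_call("Client asked for an export to PDF feature"))
for draft in drafts.values():
    print(draft.text)
```

In a loop like the one described, each recorded follow-up meeting would produce a new transcript and another pass through `process_call`, with humans reviewing the drafts in between.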

Once a proposal is signed off, or a release target is set, the work shifts from product thinking to solution architecture. A technical spec gets generated: new modules, database schemas, API interfaces, class structures. Still no code. But the chief architect takes more ownership here, as the technical detail sharpens and the decisions start to have real downstream consequences.

Human review here isn’t someone reading a document and typing corrections. Greger’s approach: tell the agent what’s missing, let it make the change, then review those changes the way you would a tracked-changes document. Approve, reject, or iterate. Different reviewers bring different agent contexts. The PM agent looks for feature fit. The architect’s context flags technical risk. Both humans and their respective agents review the same content from different angles.
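One way to picture the tracked-changes review is as a diff between the draft before and after the agent’s revision, followed by a human decision. The sketch below uses Python’s standard `difflib`; the `Decision` enum, the function names, and the sample epic text are illustrative assumptions, not anything taken from Cube5’s tooling.

```python
import difflib
from enum import Enum


class Decision(Enum):
    APPROVE = "approve"
    REJECT = "reject"
    ITERATE = "iterate"


def tracked_changes(before: str, after: str) -> list[str]:
    """Return only the lines the agent added ('+ ') or removed ('- ')."""
    diff = difflib.ndiff(before.splitlines(), after.splitlines())
    return [line for line in diff if line.startswith(("+ ", "- "))]


def apply_decision(before: str, after: str, decision: Decision) -> str:
    # APPROVE keeps the agent's revision. REJECT and ITERATE keep the prior
    # text; on ITERATE the reviewer would send new feedback to the agent.
    return after if decision is Decision.APPROVE else before


before = "Epic: export reports\nStory: export as PDF"
after = "Epic: export reports\nStory: export as PDF\nStory: export as CSV"

changes = tracked_changes(before, after)
print(changes)
```

The reviewer reads only `changes`, not the whole document, which is the point of the approach: attention goes to what the agent altered.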

The skill, Greger says, is learning when to skim and when to dig. “If AI output is fed into another agent and fed into another agent, you’re gonna drift off,” he says. “The error tends to multiply.” A simple, incremental change to an existing feature doesn’t need line-by-line review. A new module touching multiple systems does. Getting that judgment right is what’s changed most about how he works over the past year. A year ago, his instinct was to read first and ask the AI if he needed help. Now it’s AI first, step in where it matters. He’s still calibrating.
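The multiplying effect is easy to see with a toy calculation. Assume, purely for illustration, that each agent in a chain gets its step right 95% of the time and that the stages fail independently. Neither number nor assumption comes from Cube5; `chain_reliability` is a hypothetical helper.

```python
def chain_reliability(per_stage: float, stages: int) -> float:
    """Probability that every stage in an unreviewed chain is correct,
    assuming each stage succeeds independently with the same probability."""
    return per_stage ** stages


for n in (1, 3, 5):
    print(n, round(chain_reliability(0.95, n), 3))
# 1 0.95
# 3 0.857
# 5 0.774
```

Under those assumptions, five unreviewed handoffs leave roughly a one-in-four chance that something has gone wrong somewhere, which is why a human checkpoint between stages matters more as the chain grows.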

The human in the loop isn’t disappearing. But where they sit in the loop, and how they spend their attention, is changing.

Part 2 covers how the app is actually built. Coming soon!